How to build a generative AI model for image synthesis?

Artificial intelligence has made great strides in the area of content generation. From translating straightforward text instructions into images and videos to creating poetic illustrations and even 3D animation, there is no limit to AI’s capabilities, especially in terms of image synthesis. And with tools like Midjourney and DALL-E, the process of image synthesis has become simpler and more efficient than ever before. But what makes these tools so capable? The power of generative AI! Generative AI models for image synthesis are becoming increasingly important for both individual content creators and businesses. These models use complex algorithms to generate new images that are similar to the input data they are trained on. Generative AI models for image synthesis can quickly create high-quality, realistic images, which is difficult or impossible to achieve through traditional means. In fields such as art and design, generative AI models are being used to create stunning new artworks and designs that push the boundaries of creativity. In medicine, generative AI models for image synthesis are used to generate synthetic medical images for diagnostic and training purposes, allowing doctors to understand complex medical conditions better and improve patient outcomes. In addition, generative AI models for image synthesis are also being used to create more realistic and immersive virtual environments for entertainment and gaming applications. In fact, the ability to generate high-quality, realistic images using generative AI models is causing new possibilities for innovation and creativity to emerge across industries.

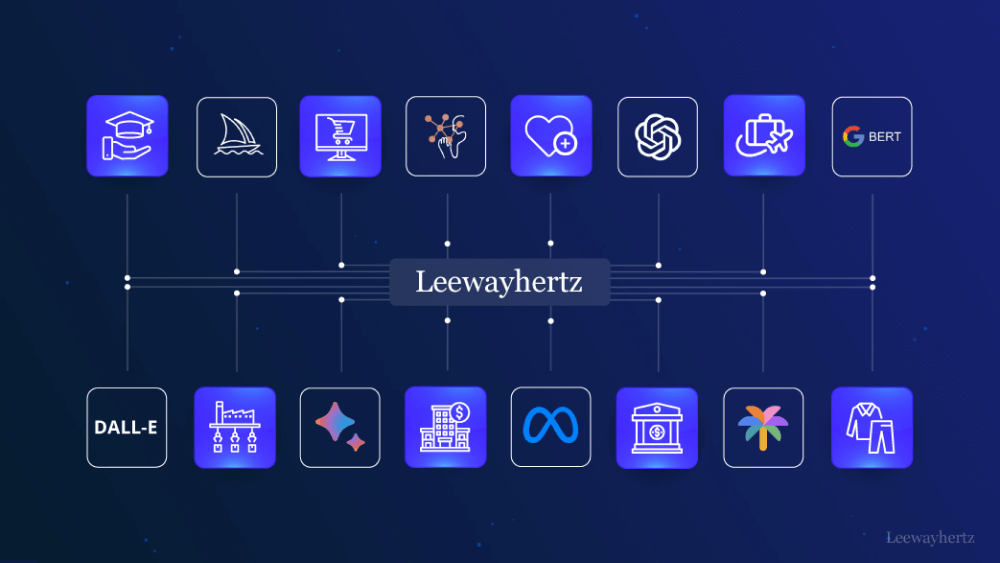

In this article, we discuss generative AI models for image synthesis, their importance, use cases and more.

- What are generative AI models?

- Understanding image synthesis and its importance

- Types of generative AI models for image synthesis

- Choosing the right dataset for your model

- Preparing data for training

- Building a generative AI model using GANs (Generative Adversarial Networks)

- Generating new images with your model

- Applications of generative AI models for image synthesis

What are generative AI models?

Generative AI models are a class of machine learning algorithms capable of producing fresh content from patterns learned from massive training datasets. These models use deep learning techniques to learn patterns and features from the training data and use that knowledge to create new data samples.

Generative AI models have a wide range of applications, such as generating images, text, code and even music. One of the most popular types of generative AI models is the Generative Adversarial Network (GAN), which consists of two neural networks: a generator network that creates new data samples and a discriminator network that evaluates whether the generated samples are real or fake.

Generative AI models have the potential to revolutionize various industries, such as entertainment, art, and fashion, by enabling the creation of novel and unique content quickly.

Understanding image synthesis and its importance

Generative models are a type of artificial intelligence that can create new images that are similar to the ones they were trained on. This technique is known as image synthesis, and it is achieved through the use of deep learning algorithms that learn patterns and features from a large database of photographs. These models are capable of correcting any missing, blurred or misleading visual elements in the images, resulting in stunning, realistic and high-quality images.

Generative AI models can even make low-quality pictures appear to have been taken by an expert by increasing their clarity and level of detail. Additionally, AI can merge existing portraits or extract features from any image to create synthetic human faces that look like real people.

The value of generative AI in image synthesis lies in its ability to generate new, original images that have never been seen before. This has significant implications for various industries, including creative, product design, marketing, and scientific fields, where it can be used to create lifelike models of human anatomy and diseases.

The most commonly used generative models in image synthesis include variational autoencoder (VAE), autoregressive models, and generative adversarial networks (GANs).

Types of generative AI models for image synthesis

Images may be synthesized using a variety of generative AI models, each of which has its own advantages and disadvantages. Here, we will discuss some of the most popular generative AI model types used for picture synthesis.

Generative Adversarial Networks (GANs)

GAN, or Generative Adversarial Network, is a popular and effective type of generative AI model used for creating images. GAN consists of two neural networks: a generator network and a discriminator network. The generator network creates new images, while the discriminator network determines if the images created by the generator are real or fake.

During the training process, the two networks are trained in parallel, in a technique known as adversarial training. The generator tries to trick the discriminator, while the discriminator tries to distinguish between real and fake images. As a result, the generator learns to create images that are increasingly realistic and difficult for the discriminator to identify as fake.

GANs have demonstrated remarkable success in producing high-quality and realistic images in various applications such as computer vision, video game design, and painting. They are capable of working with complex image structures and producing images with intricate features such as textures and patterns that other models may struggle to depict.

However, GANs require significant training to deliver high-quality results, which can be challenging. Despite these difficulties, GANs continue to be a widely used and successful method for image synthesis across various industries.

Variational Autoencoders (VAEs)

VAE, or Variational Autoencoder, is another type of generative AI model used for picture synthesis. VAEs are networks that consist of an encoder and a decoder. The encoder learns a compressed representation of an input image, also known as latent space, and the decoder uses this compressed representation to generate new images that are identical to the input image.

When combined with other methods like adversarial training, VAEs have shown promising outcomes in creating high-quality images. They are capable of generating graphics with intricate features such as textures and patterns, and can manage complicated visuals. Additionally, the encoding and decoding processes used by VAEs have a probabilistic component, which enables them to produce a wide range of new pictures from a single input image.

However, unlike GANs, VAEs may have difficulty in producing extremely realistic pictures. They also take longer to produce images since each new image needs to be encoded and decoded. Despite these drawbacks, VAEs continue to be a widely used method for image synthesis and have shown effectiveness in various applications such as computer graphics and medical imaging.

Autoregressive models

Autoregressive models are a type of generative AI model used for image creation, where the model starts with a seed image and creates new images pixel by pixel. The model predicts the value of the next pixel based on the values of the preceding pixels. While autoregressive models can create high-quality photos with intricate details, they produce new images relatively slowly because each pixel must be generated separately.

Despite this limitation, autoregressive models have demonstrated effectiveness in producing high-quality images with fine details and complex structures, particularly in applications such as picture inpainting and super-resolution. However, compared to GANs, autoregressive models may have difficulty producing extremely realistic images.

Despite these drawbacks, autoregressive models are still a popular technique for image synthesis in a variety of fields, including computer vision, medical imaging, and natural language processing. Additionally, improvements in design and training techniques continue to enhance the performance of autoregressive models for image synthesis.

Launch your project with LeewayHertz

Produce hyperrealistic images with our powerful image synthesis AI models

Choosing the right dataset for your model

Generative AI models rely heavily on the dataset they are trained on to generate high-quality, diverse images. To achieve this, the dataset should be large enough to represent the richness and variety of the target picture domain, ensuring that the generative model can learn from a wide range of examples. For example, if the goal is to create medical images, the dataset should contain a diverse range of medical photos capturing various illnesses, organs, and imaging modalities.

In addition to size and diversity, the dataset should also be properly labeled to ensure that the generative model learns the correct semantic properties of the photos. This means that each image in the dataset should be accurately labeled, indicating the object or scene depicted in the picture. Both manual and automated labeling methods can be used for this purpose.

Finally, the quality of the dataset is also important. It should be free of errors, artifacts, and biases to ensure that the generative model learns accurate and unbiased representations of the picture domain. For instance, if the dataset has biases towards certain objects or features, the generative model may learn to replicate these biases in the generated images.

Selecting the right dataset is critical for the success of generative AI models for image synthesis. A suitable dataset should be large, diverse, properly labeled, and of high quality to ensure that the generative model can learn accurate and unbiased representations of the target picture domain.

Preparing data for training

Preparing data for training a generative AI model used for image synthesis involves collecting the data, preprocessing it, augmenting it, normalizing it, and splitting it into training, validation, and testing sets. Each step is crucial in ensuring that the model can learn the patterns and features of the data correctly, leading to more accurate image synthesis.

There are several phases involved in getting data ready for generative AI model training so that the model can accurately learn the patterns and properties of the data.

Data collection: This is the initial stage in gathering the data needed to train a generative AI model for picture synthesis. The model’s performance may be significantly impacted by the type and volume of data gathered. The data may be gathered from a variety of places, including web databases, stock picture archives, and commissioned photo or video projects.

Data preprocessing: Preprocessing involves a series of operations performed on the raw data to make it usable and understandable by the model. In the context of image data, preprocessing typically involves cleaning, resizing, and formatting the images to a standard that the model can work with.

Data augmentation: It involves making various transformations to the original dataset to artificially create additional examples for training the model. It can help expand the range of the data used to train the model. This can be especially important when working with a limited dataset, as it allows the model to learn from a greater variety of examples, which can improve its ability to generalize to new, unseen examples. Data augmentation can help prevent overfitting, a common problem in machine learning. Overfitting occurs when a model becomes too specialized to the training data, to the point that it performs poorly on new, unseen data.

Data normalization: Data normalization, entails scaling the pixel values to a predetermined range, often between 0 and 1. Normalization helps to avoid overfitting by ensuring that the model can learn the patterns and characteristics of the data more quickly.

Dividing the data: Training, validation, and testing sets are created from the data. The validation set is used to fine-tune the model’s hyperparameters, the testing set is used to assess the model’s performance, and the training set is used to train the model. Depending on the size of the dataset, the splitting ratio can change, but a typical split is 70% training, 15% validation, and 15% testing.

Building a generative AI model using GANs (Generative Adversarial Networks)

Creating a generative AI model for image synthesis using GAN entails carefully gathering and preprocessing the data, defining the architecture of the generator and discriminator networks, training the GAN model, tracking the training process, and assessing the performance of the trained model.

Here are the steps discussed in detail:

- Gather and prepare the data: The data must be cleaned, labeled, and preprocessed to ensure that it is suitable for the model’s training.

- Define the architecture of the generator and discriminator networks: The generator network creates images using a random noise vector as input, while the discriminator network tries to differentiate between the generated images and the real images from the dataset.

- Train the GAN model: The generator and discriminator networks are trained concurrently, with the generator attempting to deceive the discriminator by producing realistic images and the discriminator attempting to accurately differentiate between the generated and real images.

- Monitor the training process: Keep an eye on the produced images and the loss functions of both networks to ensure that the generator and discriminator networks are settling on a stable solution. Tweaking the hyperparameters can help to improve the results.

- Test the trained GAN model: Use a different testing set to evaluate the performance of the trained GAN model by creating new images and comparing them to the real images in the testing set. Compute several metrics to evaluate the model’s performance.

- Fine-tune the model: Adjust the model’s architecture or hyperparameters, or retrain it on new data to improve its performance.

- Deploy the model: Once the model has been trained and fine-tuned, it can be used to generate images for a variety of applications.

Creating a GAN model for image synthesis requires careful attention to data preparation, model architecture, training, testing, fine-tuning, and deployment to ensure that the model can generate high-quality and realistic images.

Generating new images with your model

As discussed earlier, a GAN model consists of two networks: the generator and the discriminator. The generator network takes a random noise vector as input and generates an image that is intended to look like a real image. The discriminator network’s task is to determine whether an image is real or fake, i.e., generated by the generator network.

During training, the generator network produces fake images, and the discriminator network tries to distinguish between the real and fake images. The generator network learns to produce better fake images by adjusting its parameters to fool the discriminator network. This process continues until the generator network produces images that are indistinguishable from real images.

Once the GAN model is trained, new images can be generated by providing a random noise vector to the generator network. By adjusting the noise input, interpolating between two images, or applying style transfer, the generator network can be fine-tuned to produce images in a particular style.

However, it’s important to note that the GAN model’s capacity to produce high-quality images may be limited. Therefore, it’s crucial to assess the produced images’ quality using various metrics, such as visual inspection or automated evaluation metrics. If the quality of the generated images is not satisfactory, the GAN model can be adjusted, or more training data can be provided to improve the outcomes.

To ensure that the produced images look realistic and of excellent quality, post-processing methods like picture filtering, color correction, or contrast adjustment can be used. The images generated using the GAN model can be used for various applications, such as art, fashion, design, and entertainment.

Launch your project with LeewayHertz

Produce hyperrealistic images with our powerful image synthesis AI models

Applications of generative AI models for image synthesis

There are several uses for generative AI models, especially GANs, in picture synthesis. The following are some of the main applications of generative AI models for picture synthesis:

Art and design: New works of art and design, such as paintings, sculptures, and even furniture, may be produced using generative AI models. For instance, artists can create new patterns, textures, or colour schemes for their artwork using GANs.

Gaming: Realistic gaming assets, such as people, locations, or items, can be created using GANs. This can improve the aesthetic appeal of games and provide gamers with a more engaging experience.

Fashion: Custom clothing, accessory, or shoe designs can be created with generative AI models for image synthesis. For apparel designers and retailers, this may open up fresh creative opportunities.

Animation and film: GANs may be used to create animation, visual effects, or even whole scenes for movies and cartoons. By doing this, developing high-quality visual material may be done faster and cheaper.

X-rays, MRIs, and CT scans are just a few examples of the kinds of medical pictures that may be produced with GANs. This can help with medical research, treatment planning, and diagnosis.

GANs may also be used in photography to create high-quality photos from low-resolution ones. This can improve the quality of pictures shot using cheap cameras or mobile devices.

Essentially, there is a plethora of ways in which generative AI models may be used for picture synthesis. They may be utilized to develop new works of art and design, improve games, manufacture original clothing designs, create stunning visual effects, support medical imaging, and more.

Endnote

Developing a generative AI model for picture synthesis necessitates a thorough comprehension of machine learning ideas, including deep neural networks, loss functions, and optimization strategies. The benefits of developing such models, however, are substantial because they have a wide range of uses in industries including art, fashion, and entertainment.

From data collection and preprocessing through training and testing the model, the main phases in creating a generative AI model for picture synthesis have been covered in the article. We have also discussed the advantages and disadvantages of several generative models, such as GANs and VAEs.

The importance of choosing the appropriate architecture and hyperparameters for the model, the significance of data quality and quantity, and the necessity of ongoing model performance monitoring are other important areas we have covered.

In conclusion, developing a generative AI model for picture synthesis requires a blend of technical proficiency, originality, and in-depth knowledge of the technologies involved.

Build future-ready generative AI models for image synthesis. Contact LeewayHertz for consultation and the next project development!

Start a conversation by filling the form

All information will be kept confidential.