How to build a GPT model?

GPT models are a collection of deep learning-based language models created by the OpenAI team. Without task-specific supervision, these models can perform various NLP tasks such as question answering, textual entailment, and text summarization. They need few or no examples to understand a task, and they can perform on par with, or even better than, state-of-the-art models trained in a supervised fashion.

The GPT series from OpenAI has radically transformed the landscape of artificial intelligence. The latest addition to the series, GPT-4, has further expanded the horizons for AI applications. This article will take you on a journey through the innovative realm of GPT-4. We’ll delve into the notable advancements of GPT models while exploring how this state-of-the-art model is reshaping our interactions with AI across diverse sectors.

This article covers the key aspects of GPT models and discusses the steps required to build a GPT model from scratch.

- What is a GPT model?

- Overview of GPT models

- Use cases of GPT models

- Advantages of building GPT models

- Working mechanism of GPT models

- How to choose the right GPT model for your needs?

- Prerequisites to build a GPT model

- How to create a GPT model? – Steps for building a GPT model

- How to train an existing GPT model with your data?

- Leverage LeewayHertz’s AI development services to build a GPT model

- Things to consider while building a GPT model

- The future of custom GPTs

What is a GPT model?

GPT stands for Generative Pre-trained Transformer. Previously, language models were typically designed for single tasks like text generation, summarization or classification. GPT was among the first generalized language models in natural language processing that could be used for a variety of NLP tasks. Now let us explore the three components of GPT, namely Generative, Pre-trained, and Transformer, and understand what they mean.

Generative: Generative models are statistical models used to generate new data. These models can learn the relationships between variables in a data set to generate new data points similar to those in the original data set.

Pre-trained: These models have been pre-trained using a large data set which can be used when it is difficult to train a new model. Although a pre-trained model might not be perfect, it can save time and improve performance.

Transformer: The transformer model, an artificial neural network created in 2017, is the most well-known deep learning model capable of handling sequential data such as text. Many tasks like machine translation and text classification are performed using transformer models.

GPT can perform various NLP tasks with high accuracy thanks to the large datasets it was trained on and its architecture with billions of parameters, which allow it to capture the logical connections within the data. GPT models such as GPT-3 have been pre-trained using text from five large datasets, including Common Crawl and WebText2. The corpus contains nearly a trillion words, allowing GPT-3 to perform NLP tasks quickly with few or no examples.

Overview of GPT models

GPT models, short for Generative Pretrained Transformers, are advanced deep learning models designed for generating human-like text. These models, developed by OpenAI, have seen several iterations: GPT-1, GPT-2, GPT-3, and most recently, GPT-4.

Introduced in 2018, GPT-1 was the first in this series, using a decoder-only Transformer architecture to improve language generation capabilities. It was built with 117 million parameters and trained on the BooksCorpus dataset. GPT-1 could generate fluent and coherent language given some context. However, it had limitations, including the tendency to repeat text and difficulties with complex dialogue and long-term dependencies.

OpenAI then released GPT-2 in 2019. This model was much larger, with 1.5 billion parameters, and was trained on a larger and more diverse dataset. Its main strength was the ability to generate realistic text sequences and human-like responses. However, GPT-2 struggled with maintaining context and coherence over longer passages.

The introduction of GPT-3 in 2020 marked a huge leap forward. With a staggering 175 billion parameters, GPT-3 was trained on vast datasets and could generate nuanced responses across various tasks. It could generate text, write code, create art, and more, making it a valuable tool for many applications like chatbots and language translation. However, GPT-3 wasn’t perfect and had its share of biases and inaccuracies.

Following GPT-3, OpenAI introduced an upgraded version, GPT-3.5, and eventually released GPT-4 in March 2023. GPT-4 is the latest and most advanced of these language models, and it is multimodal: it can generate more accurate statements and handle images as inputs, allowing for captions, classifications, and analyses. GPT-4 also showcases creative capabilities like composing songs or writing screenplays. It comes in two variants, differing in their context window size: gpt-4-8K and gpt-4-32K.

GPT-4’s ability to understand complex prompts and demonstrate human-like performance on various tasks is a significant leap forward. Yet, as with all powerful tools, there are valid concerns about potential misuse and ethical implications. It’s crucial to keep these factors in mind when exploring the capabilities and applications of GPT models.

Use cases of GPT models

GPT models are known for their versatile applications, providing immense value in various sectors. Here, we will discuss several key use cases, ranging from understanding human language and generating content for UI design to image recognition, customer support, translation, code generation, tutoring, and creative writing.

Understanding human language using NLP

GPT models are instrumental in enhancing the computer’s ability to understand and process human language. This encompasses two main areas:

- Human Language Understanding (HLU): HLU refers to the machine’s ability to comprehend the meaning of sentences and phrases, effectively translating human knowledge into machine-readable format. This is achieved using deep neural networks or feed-forward neural networks and involves a complex mix of statistical, probabilistic, decision tree, fuzzy set, and reinforcement learning techniques. Developing models in this area is challenging and requires substantial expertise, time, and resources.

- Natural Language Processing (NLP): NLP focuses on interpreting and analyzing written or spoken human language. It involves training computers to understand language, rather than programming them with pre-set rules or instructions. Key applications of NLP include information retrieval, classification, summarization, sentiment analysis, document generation, and question answering. It also plays a pivotal role in data mining and other computational tasks.

Generating content for user interface design

GPT models can be employed to generate content for user interface design. For example, they can assist in creating web pages where users can upload various forms of content with just a few clicks. This ranges from adding basic elements like captions, titles, descriptions, and alt tags, to incorporating interactive components like buttons, quizzes, and cards. This automation reduces the need for additional development resources and investment.

Applications in computer vision systems for image recognition

GPT models are not limited to processing text. When combined with computer vision systems, they can perform tasks such as image recognition. These systems can identify and remember specific elements within an image, like faces, colors, and landmarks. Multimodal models such as GPT-4, which accepts images as inputs, can handle such tasks.

Enhancing customer support with AI-powered chatbots

GPT models are revolutionizing customer support by powering AI chatbots. These chatbots, armed with GPT-4, can understand and respond to customer queries with increased precision. They can simulate human-like conversations, providing detailed responses, and instant support around the clock. This significantly enhances customer service by providing quick, accurate responses, leading to improved customer satisfaction and loyalty.

Bridging language barriers with accurate translation

Language translation is another area where GPT-4 excels. Its advanced language understanding capabilities enable it to translate text between various languages accurately. GPT-4 can grasp the nuances of different languages and provide translations that retain the original meaning and context. This feature can be incredibly useful in facilitating cross-cultural communication and making information accessible to a global audience.

Streamlining code generation

GPT-4’s ability to understand and generate programming language code has made it a valuable tool for developers. It can produce code snippets based on a developer’s input, significantly speeding up the coding process and reducing the chance of errors. By understanding the context and nuances of different programming languages, GPT-4 can assist in more complex coding tasks, thus contributing to more efficient and streamlined software development.

Transforming education with personalized tutoring

The education sector can greatly benefit from the implementation of GPT-4. It can generate educational content tailored to a learner’s needs, providing personalized tutoring and learning assistance. From explaining complex concepts in a simple manner to providing support with homework, GPT-4 can make learning more engaging and accessible. Its ability to adapt to different learning styles and pace can contribute to a more personalized and effective learning experience.

Assisting in creative writing

In the realm of creative writing, GPT-4 can be an invaluable assistant. It can provide writers with creative suggestions, help overcome writer’s block, and even generate entire stories or poems. By understanding the context and maintaining the flow of the narrative, GPT-4 can produce creative pieces that are coherent and engaging. This can be a valuable tool for writers, stimulating creativity, and enhancing productivity.

Advantages of building GPT models

Building GPT (Generative Pre-trained Transformer) models offers numerous advantages that can transform natural language processing (NLP) and elevate the quality and efficiency of language generation tasks. Here are some key advantages of building GPT models:

Versatility and adaptability

GPT models are highly versatile and adaptable, capable of being fine-tuned for specific tasks and domains. This flexibility enables developers and researchers to leverage the power of GPT models across a wide range of NLP applications, including sentiment analysis, text classification, language translation, and more.

Language creativity

GPT models can generate creative and novel text. With their extensive exposure to diverse language patterns and structures during pre-training, GPT models can produce unique and imaginative responses, making them invaluable in creative writing tasks and generating innovative content.

Natural language generation

GPT models excel in generating human-like text, making them highly effective for applications such as chatbots, content generation, and creative writing. By understanding the context and semantics of the input text, GPT models produce coherent and contextually relevant responses, enhancing the overall user experience.

Natural language processing capabilities

GPT models excel in handling NLP tasks with remarkable accuracy and efficiency. The unique combination of deep learning algorithms and vast amounts of training data enables them to understand context and recognize patterns. This capability makes them powerful tools for NLP applications like chatbots, language translation, and question-answering systems.

Contextual understanding

GPT models possess a strong grasp of contextual understanding due to their pre-training on vast amounts of unlabeled data. This allows the models to capture the nuances of language and generate responses that align with the given context, resulting in more accurate and meaningful outputs.

Continual learning and improvement

GPT models can be further fine-tuned and updated as new labeled data becomes available. This continual learning and improvement process allows the models to adapt to evolving language patterns and stay up to date with the latest trends and contexts, ensuring their relevance and accuracy over time.

Efficient training

One of the standout benefits of GPT models is their efficient training process. Because a GPT model starts from pre-trained weights, adapting it to a new task through fine-tuning is typically much faster than training a comparable model from scratch, enabling quicker completion and deployment of projects. This is especially important for businesses that work on time- and resource-sensitive projects, since it saves valuable time and resources.

Cost and resource effectiveness

GPT models offer high performance at a relatively low cost, making them an attractive option for various businesses. They provide a better cost-performance ratio compared to other AI models without compromising quality, which is essential for businesses looking to reduce their resource and computational costs.

Superior performance

GPT models have a proven track record of delivering superior performance compared to other models. In several benchmark tests, they have outperformed their counterparts, making GPT models a preferred choice for businesses seeking accurate and reliable AI solutions. This ensures better and more successful project outcomes and customer satisfaction.

Improved accuracy

Accuracy is a key benefit of using GPT models. They help make accurate predictions and decisions due to their training on large datasets. GPT models achieve this by understanding patterns and relationships within the data. Businesses rely on the accuracy of outputs as they make business and investment decisions based on GPT responses. Enhanced accuracy leads to increased efficiency and productivity, as AI-powered systems provide more relevant and useful results, saving time, effort, and resources.

Working mechanism of GPT models

GPT is an AI language model based on the transformer architecture that is pre-trained, generative, unsupervised, and capable of performing well in zero-, one-, and few-shot multitask settings. It predicts the next token (a short sequence of characters) from a sequence of tokens, even for NLP tasks it has not been explicitly trained on. After seeing only a few examples, it can achieve the desired outcomes in certain benchmarks, including machine translation, Q&A and cloze tasks. GPT models calculate the likelihood of a word appearing next in a text given the words that precede it; in other words, they rely on conditional probability. For example, in the sentence, “Margaret is organizing a garage sale…Perhaps we could purchase that old…” the word ‘chair’ is more likely to be appropriate than the word ‘elephant’.

Transformer models also use multiple units called attention blocks that learn which parts of a text sequence to focus on. One transformer might have multiple attention blocks, each learning different aspects of a language.
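To make the conditional-probability idea in the garage sale example concrete, here is a minimal sketch of how raw model scores can be turned into next-word probabilities with a softmax. The candidate words and the scores are made-up values for illustration, not output from a real GPT model.

```python
import math

def softmax(scores):
    """Convert raw scores (logits) into probabilities that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical scores a model might assign to candidate next words after
# "Perhaps we could purchase that old ...". These numbers are assumptions.
candidates = ["chair", "lamp", "elephant"]
logits = [3.2, 2.5, -1.0]

probs = dict(zip(candidates, softmax(logits)))
most_likely = max(probs, key=probs.get)

for word, p in probs.items():
    print(f"P({word} | context) = {p:.3f}")
print("Most likely next word:", most_likely)
```

A real model produces one score per vocabulary entry rather than three, but the step from scores to probabilities is the same.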

A transformer architecture has two main segments: an encoder that primarily operates on the input sequence and a decoder that operates on the target sequence during training and predicts the next item. For example, a transformer might take a sequence of English words and predict the French words of the correct translation one at a time until the translation is complete. GPT models themselves use only a decoder-style stack, but the full encoder-decoder design is described here for context.

The encoder determines which parts of the input should be emphasized. For example, the encoder can read a sentence like “The quick brown fox jumped.” It then calculates the embedding matrix (embedding in NLP allows words with similar meanings to have a similar representation) and converts it into a series of attention vectors. Now, what is an attention vector? You can view an attention vector in a transformer model as a special calculator, which helps the model understand which parts of any given information are most important in making a decision. Suppose you have been asked multiple questions in an exam that you must answer using different information pieces. The attention vector helps you to pick the most important information to answer each question. It works in the same way in the case of a transformer model.
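The attention computation described above can be sketched in a few lines. This is a simplified illustration of scaled dot-product self-attention in plain Python: it assumes tiny made-up embeddings and uses them directly as queries, keys, and values, whereas a real transformer first applies learned projection matrices.

```python
import math

def softmax(scores):
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of equal-length vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query with every key, scaled by sqrt(d_k)
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        weights = softmax(scores)  # how much to focus on each token
        # Weighted average of the value vectors
        out = [sum(w * v[j] for w, v in zip(weights, values))
               for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Toy 2-dimensional embeddings for three tokens (made-up numbers)
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = attention(x, x, x)  # self-attention: Q = K = V = x

for i, row in enumerate(weights):
    print(f"token {i} attends with weights", [round(w, 3) for w in row])
```

Each row of `weights` sums to 1, so every output vector is a weighted blend of the inputs, with larger weights on the tokens the query is most similar to.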

The multi-head attention block initially produces these attention vectors. They are then normalized and passed into a fully connected layer. Normalization is applied again before the result is passed to the decoder. During training, the decoder works directly on the target output sequence. Let us say that the target output is the French translation of the English sentence “The quick brown fox jumped.” The decoder computes separate embedding vectors for each French word of the sentence. Additionally, positional encoding is applied in the form of sine and cosine functions. Masked attention is also used, which means that when predicting a given word, the model sees only the French words that come before it, whereas all later words are masked. This allows the transformer to learn to predict the next French word. These outputs are then added and normalized before being passed on to another attention block, which also receives the attention vectors generated by the encoder.
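Two of the ingredients mentioned above, sine/cosine positional encoding and masked attention, can be illustrated with a short sketch. The constant 10000 follows the formulation in the original transformer paper; the function names and the tiny sizes are assumptions chosen for readability.

```python
import math

def positional_encoding(seq_len, d_model):
    """Sinusoidal position vectors: sine on even indices, cosine on odd ones."""
    table = []
    for pos in range(seq_len):
        row = []
        for i in range(d_model):
            # Each pair of dimensions (2k, 2k+1) shares one frequency
            angle = pos / (10000 ** ((2 * (i // 2)) / d_model))
            row.append(math.sin(angle) if i % 2 == 0 else math.cos(angle))
        table.append(row)
    return table

def causal_mask(seq_len):
    """mask[i][j] is True when position i may attend to position j (j <= i)."""
    return [[j <= i for j in range(seq_len)] for i in range(seq_len)]

pe = positional_encoding(seq_len=4, d_model=6)
mask = causal_mask(4)

for row in mask:
    print(["x" if allowed else "." for allowed in row])
```

The printed mask is lower-triangular: the first position sees only itself, while the last position sees the whole sequence so far. In practice, masked-out positions are given a score of negative infinity before the softmax so that they receive zero attention weight.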

In addition, GPT models employ a form of data compression while consuming millions upon millions of sample texts, converting words into vectors, which are simply numerical representations. The language model then unpacks the compressed text into human-friendly sentences. The model’s accuracy is improved by compressing and decompressing text. This also allows it to calculate the conditional probability of each word. GPT models can perform well in “few-shot” settings and respond to text samples they have not seen before. They only require a few examples to produce pertinent responses because they have been trained on many text samples.

Besides, GPT models have many capabilities, such as generating high-quality synthetic text samples. If you prime the model with an input, it will generate a long continuation. GPT models outperform other language models trained on domains such as Wikipedia, news, and books without using domain-specific training data. GPT learns language tasks such as reading comprehension, summarization and question answering from the text alone, without task-specific training data. The scores on these tasks (“score” refers to a numerical value the model assigns to represent the likelihood or probability of a given output or result) are not the best, but they suggest that unsupervised techniques, given sufficient data and computation, could benefit these tasks.

Here is a comprehensive comparison of GPT models with other language models.

| Feature | GPT | BERT (Bidirectional Encoder Representations from Transformers) | ELMo (Embeddings from Language Models) |
|---|---|---|---|
| Pretraining approach | Unidirectional language modeling | Bidirectional language modeling (masked language modeling and next sentence prediction) | Bidirectional language modeling (separate forward and backward LSTM language models) |
| Pretraining data | Large amounts of text from the internet | Large amounts of text from the internet | A combination of internal and external corpus |
| Architecture | Transformer network | Transformer network | Deep bi-directional LSTM network |
| Outputs | Context-aware token-level embeddings | Context-aware token-level and sentence-level embeddings | Context-aware word-level embeddings |
| Fine-tuning approach | Multi-task fine-tuning (e.g., text classification, sequence labeling) | Multi-task fine-tuning (e.g., text classification, question answering) | Fine-tuning on individual tasks |
| Advantages | Can generate text, high flexibility in fine-tuning, large model size | Strong performance on a variety of NLP tasks, considering the context in both directions | Generates task-specific features, considers context from the entire input sequence |
| Limitations | Can generate biased or inaccurate text, requires large amounts of data | Limited to fine-tuning and requires task-specific architecture modifications; requires large amounts of data | Limited context and task-specific; requires task-specific architecture modifications |

How to choose the right GPT model for your needs?

Choosing the right GPT model for your project depends on several factors, including the complexity of the tasks you want the model to handle, the type of language you want to generate, and the size of your available dataset.

If you need a model that can generate simple text responses, such as replying to customer inquiries, GPT-1 could be a sufficient choice. It’s capable of accomplishing straightforward tasks without requiring extensive data or computational resources.

However, if your project involves more complex language generation like conducting deep analyses of vast amounts of web content, recommending reading material, or generating stories, then GPT-3 would be a more suitable option. GPT-3 has the capacity to process and learn from billions of web pages, providing more nuanced and sophisticated outputs.

In terms of data requirements, the size of your available dataset should be a key consideration. GPT-3, with its larger capacity for learning, tends to work best with big datasets. If you don’t have large amounts of data available for training, GPT-3 might not be the most efficient choice.

In contrast, GPT-1 and GPT-2 are more manageable models that can be trained effectively with smaller datasets. These versions could be more fitting for projects with limited data resources or for small-scale tasks.

Finally, there’s GPT-4. Released in March 2023, it is the most capable model in the series, but OpenAI has not published details of its architecture or training data, and it is likely to demand more computational resources than earlier models. Always consider the complexity of your task, your resource availability, and the specific benefits each GPT model offers when choosing the right one for your project.

Prerequisites to build a GPT model

Before starting the process of building a GPT model, several prerequisites are essential to ensure a smooth and successful development journey. Here are the key prerequisites to consider:

- Domain-specific data: Collect or curate a substantial amount of domain-specific data that aligns with the intended application or task. A diverse and relevant dataset is crucial for training a GPT model to generate accurate and contextually appropriate responses.

- Computational resources: Building a GPT model requires significant computational resources, including processing power and memory. Ensure access to robust computing infrastructure or consider utilizing cloud-based solutions to handle the computational demands of training and fine-tuning the model.

- Data preprocessing: Prepare your dataset by performing necessary preprocessing steps such as cleaning, tokenization, and encoding. This ensures the data is in a suitable format for effective training of the GPT model.

- GPU acceleration: Utilize GPU acceleration to speed up the training process. Given the large-scale architecture of GPT models, parallel processing provided by GPUs is essential for significantly reducing training times.

- Fine-tuning strategy: Define a fine-tuning strategy to adapt the pre-trained GPT model to your specific task or domain. Identify the appropriate dataset for fine-tuning and establish the parameters and hyperparameters required for achieving optimal performance.

- Evaluation metrics: Select appropriate evaluation metrics that align with your performance goals for the GPT model. Common metrics include perplexity, BLEU score, or custom domain-specific metrics that measure the quality and coherence of the generated text.

- Expertise in deep learning: Acquire a solid understanding of deep learning concepts, particularly those related to sequence-to-sequence models, attention mechanisms, and transformer architectures. Familiarize yourself with the principles underlying GPT models to build and fine-tune them effectively.

- Version control and experiment tracking: Implement a version control system and experiment tracking mechanism to manage iterations, track changes, and maintain records of configurations, hyperparameters, and experimental results.

- Iteration: Building a high-quality GPT model requires patience and a willingness to iterate. Experiment with different architectures, hyperparameters, and training strategies to achieve the desired performance. Continuous experimentation, evaluation, and refinement are crucial for optimizing the model’s performance.

To build a GPT (Generative Pretrained Transformer) model, the following tools and resources are required:

- A deep learning framework, such as TensorFlow or PyTorch, to implement the model and train it on large amounts of data.

- A large amount of training data, such as text from books, articles, or websites to train the model on language patterns and structure.

- A high-performance computing environment, such as GPUs or TPUs, for accelerating the training process.

- Knowledge of deep learning concepts, such as neural networks and natural language processing (NLP), to design and implement the model.

- Tools for data pre-processing and cleaning, such as NumPy, Pandas, or NLTK, to prepare the training data for input into the model.

- Tools for evaluating the model, such as perplexity or BLEU scores, to measure its performance and make improvements.

- An NLP library, such as spaCy or NLTK, for tokenizing, stemming and performing other NLP tasks on the input data.
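Of the evaluation tools listed above, perplexity is the simplest to compute by hand. The following sketch assumes you already have the probability the model assigned to each correct next token; the probability values are invented for illustration.

```python
import math

def perplexity(token_probs):
    """Perplexity = exp(average negative log-probability of the true tokens)."""
    nll = [-math.log(p) for p in token_probs]
    return math.exp(sum(nll) / len(nll))

# Hypothetical probabilities assigned to the correct next token at each step
confident = perplexity([0.9, 0.8, 0.95, 0.85])
uncertain = perplexity([0.2, 0.1, 0.25, 0.15])
uniform = perplexity([0.25] * 4)  # always guessing among 4 equally likely tokens

print(f"confident model: {confident:.2f}")
print(f"uncertain model: {uncertain:.2f}")
print(f"uniform guess:   {uniform:.2f}")
```

Lower is better: a model that always guesses uniformly among four tokens has a perplexity of about 4, and a model that assigns high probability to the correct tokens approaches 1.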

Besides, you need to understand the following deep learning concepts to build a GPT model:

- Neural networks: As GPT models implement neural networks, you must thoroughly understand how they work and their implementation techniques in a deep learning framework.

- Natural language processing (NLP): NLP techniques are widely used in GPT modeling for tokenization, stemming, and text generation. So, it is necessary to have a fundamental understanding of NLP techniques and their applications.

- Transformers: GPT models work based on transformer architecture, so understanding it and its role in language processing and generation is important.

- Attention mechanisms: Knowledge of how attention mechanisms work is essential to enhance the performance of the GPT model.

- Pretraining: It is essential to apply the concept of pretraining to the GPT model to improve its performance on NLP tasks.

- Generative models: Understanding the basic concepts and methods of generative models is essential to understand how they can be applied to build your own GPT model.

- Language modeling: GPT models work based on large amounts of text data. So, a clear understanding of language modeling is required to apply it for GPT model training.

- Optimization: An understanding of optimization algorithms, such as stochastic gradient descent, is required to optimize the GPT model during training.
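As a small illustration of the optimization concept listed above, here is a sketch of stochastic gradient descent fitting a single weight. The toy data, learning rate, and step count are assumptions; training a GPT model applies the same update rule to millions or billions of weights, usually through an optimizer provided by the deep learning framework.

```python
import random

random.seed(0)

# Toy data generated from y = 2 * x; the goal is to recover the weight 2.0
data = [(x, 2.0 * x) for x in [0.5, 1.0, 1.5, 2.0]]

w = 0.0               # initial guess for the weight
learning_rate = 0.05  # step size for each update

for step in range(200):
    x, y = random.choice(data)     # "stochastic": one random sample per step
    pred = w * x
    grad = 2 * (pred - y) * x      # derivative of (pred - y)^2 with respect to w
    w -= learning_rate * grad      # move against the gradient

print(f"learned weight: {w:.4f}")
```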

Alongside this, you need proficiency in any of the following programming languages with a solid understanding of programming concepts, such as object-oriented programming, data structures, and algorithms, to build a GPT model.

- Python: The most commonly used programming language in deep learning and AI. It has several libraries, such as TensorFlow, PyTorch, and NumPy, used for building and training GPT models.

- R: A popular programming language for data analysis and statistical modeling, with several packages for deep learning and AI.

- Julia: A high-level, high-performance programming language well-suited for numerical and scientific computing, including deep learning.

How to create a GPT model? A step-by-step guide

In this section, we will use code snippets to walk through the steps for building a GPT (Generative Pre-trained Transformer) model from scratch using the PyTorch library and the transformer architecture. The code is organized into several sections performing the following tasks sequentially:

- Data preprocessing: The first section of the code preprocesses the input text data by tokenizing it into a list of words, encoding each word into a unique integer, and generating sequences of fixed length using a sliding window approach.

- Model configuration: This section of the code defines the configuration parameters for the GPT model, including the number of transformer layers, the number of attention heads, the size of the hidden layers, and the size of the vocabulary.

- Model architecture: This section of the code defines the architecture of the GPT model using PyTorch modules. The model consists of an embedding layer, followed by a stack of transformer layers, and a linear layer that outputs the probability distribution over the vocabulary for the next word in the sequence.

- Training loop: This section of the code defines the training loop for the GPT model. It uses the Adam optimizer to minimize the cross-entropy loss between the predicted and actual next words in the sequence. The model is trained on batches of data generated from the preprocessed text data.

- Text generation: The final section of the code demonstrates how to use the trained GPT model to generate new text. It initializes the context with a given seed sequence and iteratively generates new words by sampling from the probability distribution output by the model for the next word in the sequence. The generated text is decoded back into words and printed to the console.
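The data preprocessing task in the list above (tokenize into words, map each word to an integer, and slide a fixed-length window over the result) can be sketched as follows. This is an illustrative stand-alone version with a hypothetical helper name, not the article's actual preprocessing code.

```python
def build_sequences(text, seq_len):
    """Tokenize into words, map words to integers, and slide a fixed-length window."""
    words = text.lower().split()
    # Assign each unique word an integer ID (sorted for reproducibility)
    vocab = {w: i for i, w in enumerate(sorted(set(words)))}
    ids = [vocab[w] for w in words]
    pairs = []
    for start in range(len(ids) - seq_len):
        context = ids[start:start + seq_len]   # model input
        target = ids[start + seq_len]          # next word to predict
        pairs.append((context, target))
    return vocab, pairs

vocab, pairs = build_sequences(
    "the quick brown fox jumped over the lazy dog", seq_len=3
)

print("vocabulary size:", len(vocab))
for context, target in pairs:
    print(context, "->", target)
```

Each pair holds three consecutive word IDs as the context and the ID of the following word as the prediction target, which is the shape of data a next-word training loop expects.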

Building a GPT model involves the following steps:

1. Importing libraries

The initial step in constructing a GPT model involves importing essential libraries that provide the tools and functionalities required for data manipulation, mathematical computations, and neural network operations. Key libraries typically used include:

- NumPy: For numerical operations and handling arrays, which is crucial for managing data and mathematical functions.

- Pandas: For data manipulation and analysis, enabling efficient reading, processing, and handling of data.

- TensorFlow or PyTorch: For constructing and training the neural network. These frameworks offer flexible and powerful tools for model development.

- Transformers from Hugging Face: For leveraging pre-built transformer models and utilities, which can simplify the development process and enhance model capabilities.

These libraries provide the foundational tools for the GPT model development process, enabling efficient data processing, model building, and training.

2. Defining hyperparameters

The next step is to define various hyperparameters for building a GPT model. Hyperparameters are critical settings that influence the model’s architecture, training and fine-tuning process. Properly tuning these parameters is essential for optimizing model performance. Key hyperparameters to define include:

- Learning rate: Determines the size of the steps taken to update the model weights during training. A higher learning rate speeds up training but can cause instability, while a lower rate ensures stability but might slow down the process.

- Batch size: Refers to the number of training samples processed before updating the model. Larger batches lead to more stable gradients but require more memory.

- Number of layers: Specifies the number of transformer blocks in the model. More layers can enhance the model’s capacity but increase computational demands.

- Number of attention heads: Indicates how many parallel self-attention mechanisms are used. More heads enable the model to focus on various parts of the input sequence simultaneously.

- Hidden size: Defines the dimensionality of the token embeddings and the feedforward network layers, influencing the model’s capacity and performance.

- Block size: Sets the maximum sequence length for context, affecting how much text the model can process at once.

- Evaluation interval: Determines how often the model’s performance is evaluated during training, ensuring timely adjustments.

- Device: Specifies whether to train the model on a CPU or GPU. GPUs generally offer faster training due to their parallel processing capabilities.

- Eval iters: Typically sets how many batches are sampled and averaged each time the loss is estimated. It is distinct from the evaluation interval, which sets how often that estimate is taken.

- n_embd (number of embedding dimensions): The name commonly used in code for the hidden size. It sets the width of the vector that represents each token.

- n_head (number of attention heads): The name commonly used in code for the number of attention heads. The embedding size is usually chosen to be divisible by it so that each head receives an equal slice.

- n_layer (number of layers): The name commonly used in code for the number of transformer blocks in the GPT model.

- Dropout: Indicates the dropout probability used during training to prevent overfitting by randomly dropping neurons.

These hyperparameters guide the model’s structure and training, impacting its performance and efficiency.
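One convenient way to keep these settings together is a small configuration object. The sketch below is only an illustration: the class name `GPTConfig` and every default value are placeholder assumptions, not recommended or tuned settings.

```python
from dataclasses import dataclass


@dataclass
class GPTConfig:
    # Every default here is an illustrative placeholder, not a tuned value.
    learning_rate: float = 3e-4
    batch_size: int = 32       # sequences per optimization step
    block_size: int = 128      # maximum context length in tokens
    n_embd: int = 256          # hidden size / embedding dimensions
    n_head: int = 8            # attention heads per block
    n_layer: int = 6           # number of transformer blocks
    dropout: float = 0.1
    eval_interval: int = 500   # evaluate every this many training steps
    eval_iters: int = 100      # batches averaged per evaluation
    device: str = "cpu"        # e.g. "cpu" or "cuda"

    def __post_init__(self):
        # Each head works on an equal slice of the embedding.
        if self.n_embd % self.n_head != 0:
            raise ValueError("n_embd must be divisible by n_head")
        if not 0.0 <= self.dropout < 1.0:
            raise ValueError("dropout must be in [0, 1)")

    @property
    def head_size(self) -> int:
        return self.n_embd // self.n_head


config = GPTConfig()
print(config.head_size)
```

Validating the relationship between `n_embd` and `n_head` up front surfaces a mismatched configuration before any training time is spent.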

3. Reading input file

The input file, usually containing text data, needs to be read and processed. This file could be in various formats such as plain text, CSV, or JSON. Reading the input involves:

- Loading the data: Using libraries like Pandas or native file handling functions to read the text data into a usable format.

- Preprocessing: Removing unnecessary characters, normalizing the text, and, depending on the requirements of the GPT model, tokenizing it into sentences, words, or characters from which a vocabulary is built. This prepares the data for encoding and model training.

Preprocessed text is essential for accurate and efficient model training.
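As a minimal sketch of this step, assuming the corpus is a single UTF-8 plain-text file, the function below reads the file and applies light normalization. The function name `load_text` and the specific cleanup rules are illustrative choices; real projects often need more, or different, preprocessing.

```python
import re
from pathlib import Path


def load_text(path):
    """Read a UTF-8 text file and apply light normalization."""
    raw = Path(path).read_text(encoding="utf-8")
    # Normalize line endings, then collapse runs of spaces and tabs.
    text = raw.replace("\r\n", "\n").replace("\r", "\n")
    text = re.sub(r"[ \t]+", " ", text)
    # Drop leading and trailing whitespace from the whole corpus.
    return text.strip()
```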

4. Identifying unique characters and creating vocabulary

Building a vocabulary from the text data is crucial for the model to process and understand the text.

- Create a list of unique characters: Extract unique characters from the text using functions like set() and convert them to a sorted list. This list forms the vocabulary of the model.

- Determine vocabulary size: The length of this list indicates the number of unique characters, which influences the model’s capacity and complexity. A larger vocabulary allows for more expressive models but also increases training time.

Once the vocabulary is created, the characters in the text data can be mapped to integer values and passed through the GPT model to generate predictions.
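For a character-level model, the two bullets above can be sketched in a few lines. The short string here is only a stand-in for the preprocessed corpus.

```python
text = "hello world"  # stand-in for the preprocessed corpus

# Unique characters, sorted so the vocabulary order is reproducible.
chars = sorted(set(text))
vocab_size = len(chars)

print(chars)       # [' ', 'd', 'e', 'h', 'l', 'o', 'r', 'w']
print(vocab_size)  # 8
```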

5. Creating mapping

The next step is to create a mapping between characters and integers, which is necessary for building a language model such as GPT. For the model to work with text data, it needs to be able to represent each character as a numerical value.

- Tokenization: Breaking down the text into smaller units (tokens).

- Vocabulary creation: Building a vocabulary of unique tokens and assigning each a unique integer ID.

- Mapping tokens: Creating a dictionary that maps each token to its integer ID for encoding the text data.

This mapping allows the model to convert text into a numerical format suitable for training.
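A minimal character-level sketch of such a mapping is shown below. The names `stoi` (string to integer) and `itos` (integer to string) are illustrative; the reverse dictionary is included because generated IDs eventually have to be turned back into text.

```python
text = "hello world"  # stand-in for the preprocessed corpus
chars = sorted(set(text))

# Forward mapping: character -> integer ID.
stoi = {ch: i for i, ch in enumerate(chars)}
# Reverse mapping: integer ID -> character, used when decoding model output.
itos = {i: ch for ch, i in stoi.items()}

print(stoi["h"])        # 3
print(itos[stoi["h"]])  # h
```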

6. Encoding input data

Encoding transforms raw text data into numerical format using the token-to-ID mappings created earlier. This process includes:

- Tokenization: Converting text into tokens (words, subwords, or characters) based on the chosen vocabulary.

- Integer encoding: Replacing tokens with their corresponding integer IDs from the vocabulary.

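- Padding: Ensuring that all sequences are of equal length by adding special padding tokens as needed. This applies when separate sequences of different lengths are batched together; if training samples are cut as fixed-length windows from one continuous token stream, padding is usually unnecessary.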

Encoded data is ready for model training and evaluation.
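The three bullets above can be sketched for a character-level vocabulary as follows. The helper names and the `<pad>` token reserved at ID 0 are assumptions made for illustration, not a fixed convention.

```python
def build_vocab(text, pad_token="<pad>"):
    """Build token<->ID mappings, reserving ID 0 for the padding token."""
    tokens = [pad_token] + sorted(set(text))
    stoi = {tok: i for i, tok in enumerate(tokens)}
    itos = {i: tok for tok, i in stoi.items()}
    return stoi, itos


def encode(s, stoi):
    """Replace each character with its integer ID (KeyError if unseen)."""
    return [stoi[ch] for ch in s]


def decode(ids, itos, pad_token="<pad>"):
    """Turn IDs back into text, skipping padding."""
    return "".join(itos[i] for i in ids if itos[i] != pad_token)


def pad(ids, length, pad_id=0):
    """Truncate or right-pad a sequence to exactly `length` IDs."""
    return ids[:length] + [pad_id] * max(0, length - len(ids))


stoi, itos = build_vocab("hello world")
ids = pad(encode("hello", stoi), 8)
print(ids)                # [4, 3, 5, 5, 6, 0, 0, 0]
print(decode(ids, itos))  # hello
```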

7. Splitting up the data into train and validation sets

To evaluate the model’s performance, the data needs to be split into training and validation sets:

- Training set: Used to train the model. This set typically constitutes the majority of the data.

- Validation set: Used to evaluate the model’s performance during training. This helps in tuning hyperparameters and preventing overfitting.

This split ensures that the model is evaluated on unseen data and can generalize well.
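A simple way to do this for one long sequence of token IDs is to cut it at a fixed point. The sketch below assumes a 90/10 split, which is a common but arbitrary choice, and the function name is illustrative.

```python
def train_val_split(data, train_fraction=0.9):
    """Split a sequence into a leading training part and a trailing validation part."""
    if not 0.0 < train_fraction < 1.0:
        raise ValueError("train_fraction must be between 0 and 1")
    n = int(train_fraction * len(data))
    return data[:n], data[n:]


encoded = list(range(100))  # stand-in for the encoded token IDs
train_data, val_data = train_val_split(encoded)
print(len(train_data), len(val_data))  # 90 10
```

Cutting the sequence in order, rather than shuffling individual tokens, keeps the validation text contiguous and separate from the training text.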

8. Generating batches of input and target data for training the GPT

During training, data is processed in batches to make the training process more efficient. This involves:

- Creating input sequences: Dividing the encoded data into sequences of a fixed length (block size).

- Batching: Grouping sequences into batches, where each batch contains a fixed number of sequences.

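- Input and target preparation: For each batch, the input is a sequence of tokens, and the target is the same sequence shifted one position to the right, so that the target at each position is the token that follows the input up to that point. This trains the model to predict the next token at every position in the sequence.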

Batches of these input and target sequences are then fed into the model during training to update its weights and improve its performance.
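The batching steps above can be sketched in plain Python as follows. The function name `get_batch` and the use of random window positions are illustrative assumptions; in practice the resulting lists would be converted to tensors in the chosen framework.

```python
import random


def get_batch(data, block_size, batch_size, rng):
    """Sample `batch_size` windows; targets are the inputs shifted by one token."""
    if len(data) <= block_size:
        raise ValueError("data must be longer than block_size")
    # Each start index leaves room for block_size inputs plus one extra target.
    starts = [rng.randrange(len(data) - block_size) for _ in range(batch_size)]
    x = [data[s : s + block_size] for s in starts]
    y = [data[s + 1 : s + block_size + 1] for s in starts]
    return x, y


data = list(range(50))  # stand-in for the encoded training data
xb, yb = get_batch(data, block_size=8, batch_size=4, rng=random.Random(0))
print(xb[0])
print(yb[0])
```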

9. Defining one head of the self-attention mechanism in a transformer model

The self-attention mechanism allows the model to focus on different parts of the input sequence when making predictions. A single head of self-attention includes:

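- Query, key, and value matrices: These matrices are used to compute attention scores. Each token is projected into a query, a key, and a value vector.

- Attention scores: Calculated by taking the dot product of query and key vectors, scaling by the square root of the head dimension, and applying softmax to obtain attention weights. In a GPT-style model, a causal mask is applied before the softmax so that each token attends only to itself and earlier tokens.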

- Weighted sum: Combine value vectors weighted by attention scores to produce the self-attention output.

This mechanism allows the model to capture dependencies between tokens.
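To make the computation concrete, here is a minimal NumPy sketch of one causal self-attention head. It illustrates the arithmetic only and is not a trainable layer: the weight matrices are passed in as plain arrays, dropout is omitted, and the function names are illustrative.

```python
import numpy as np


def softmax(x, axis=-1):
    """Numerically stable softmax."""
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def attention_head(x, w_q, w_k, w_v):
    """x: (T, n_embd); w_q, w_k, w_v: (n_embd, head_size).

    Returns the head output (T, head_size) and the attention weights (T, T).
    """
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    head_size = q.shape[-1]
    # Scaled dot-product scores between every pair of positions.
    scores = (q @ k.T) / np.sqrt(head_size)
    # Causal mask: position i may not attend to positions j > i.
    T = x.shape[0]
    future = np.triu(np.ones((T, T), dtype=bool), k=1)
    scores = np.where(future, -np.inf, scores)
    weights = softmax(scores, axis=-1)
    # Weighted sum of value vectors.
    return weights @ v, weights


rng = np.random.default_rng(0)
x = rng.normal(size=(4, 8))  # 4 tokens, n_embd = 8
w_q, w_k, w_v = (rng.normal(size=(8, 2)) for _ in range(3))
out, weights = attention_head(x, w_q, w_k, w_v)
print(out.shape)  # (4, 2)
```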

10. Implementing the multi-head attention mechanism

The multi-head attention mechanism improves the model’s ability to capture various relationships between tokens by using multiple self-attention heads. This involves:

- Parallel attention heads: Running several self-attention mechanisms in parallel, each with its own set of query, key, and value matrices.

- Concatenation: Combining the outputs of all attention heads.

- Linear transformation: Passing the concatenated output through a linear layer to produce the final attention output.

Multi-head attention provides a richer representation of the input data.
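The three steps above can be sketched in NumPy by running several causal heads, concatenating their outputs, and applying an output projection. As before, this is an illustration of the arithmetic under simplifying assumptions (plain arrays as weights, no bias terms, no dropout), and all names are hypothetical.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def causal_head(x, w_q, w_k, w_v):
    """One causal self-attention head: (T, n_embd) -> (T, head_size)."""
    q, k, v = x @ w_q, x @ w_k, x @ w_v
    scores = (q @ k.T) / np.sqrt(q.shape[-1])
    T = x.shape[0]
    future = np.triu(np.ones((T, T), dtype=bool), k=1)
    scores = np.where(future, -np.inf, scores)
    return softmax(scores) @ v


def multi_head_attention(x, heads, w_o):
    """heads: list of (w_q, w_k, w_v) tuples; w_o: (n_head * head_size, n_embd)."""
    # Parallel heads, each with its own projections.
    outputs = [causal_head(x, w_q, w_k, w_v) for (w_q, w_k, w_v) in heads]
    # Concatenate along the feature axis, then mix with a linear layer.
    concat = np.concatenate(outputs, axis=-1)
    return concat @ w_o


rng = np.random.default_rng(0)
T, n_embd, n_head = 5, 8, 2
head_size = n_embd // n_head
x = rng.normal(size=(T, n_embd))
heads = [tuple(rng.normal(size=(n_embd, head_size)) for _ in range(3))
         for _ in range(n_head)]
w_o = rng.normal(size=(n_head * head_size, n_embd))
print(multi_head_attention(x, heads, w_o).shape)  # (5, 8)
```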

11. Model training and text generation

Once the model architecture is defined, training and text generation follow:

- Training: Feed batches of data into the model, compute the loss (typically the cross-entropy between the predicted next-token distribution and the actual next tokens), and update model weights using an optimizer. This process iteratively improves the model’s performance.

- Text generation: After training, generate text by providing a prompt, predicting subsequent tokens, and sampling from the probability distribution to produce coherent and contextually relevant text sequences.

Each of these steps is critical to successfully building, training, and deploying a GPT model. From importing libraries to model training and text generation, these processes ensure that the model is effectively trained and ready to handle various text-based tasks.
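Two numerical pieces of this step can be shown without a deep learning framework: the cross-entropy loss for one prediction, and sampling the next token from the model's output scores (logits). In the sketch below, `next_logits` is a hypothetical callable standing in for a trained model, and the optimizer update itself is left to the framework.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    scaled = [l / temperature for l in logits]
    m = max(scaled)
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(logits, target_id):
    """Loss for one position: negative log-probability of the actual next token."""
    return -math.log(softmax(logits)[target_id])


def sample_next(logits, rng, temperature=1.0):
    """Draw one token ID from the predicted distribution."""
    probs = softmax(logits, temperature)
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]


def generate(next_logits, prompt_ids, max_new_tokens, rng):
    """Autoregressive loop: predict, sample, append, repeat.

    `next_logits` is a hypothetical stand-in for a trained model: it takes
    the IDs so far and returns one score per vocabulary entry.
    """
    ids = list(prompt_ids)
    for _ in range(max_new_tokens):
        ids.append(sample_next(next_logits(ids), rng))
    return ids


# With two equally likely tokens, the loss equals ln(2).
print(cross_entropy([0.0, 0.0], target_id=0))
```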

How to train an existing GPT model with your data?

The previous section introduced how to construct a GPT model from the ground up. Now, let’s look at adapting a pre-existing model to your own data. This is known as ‘fine-tuning’, a process that refines a base or ‘foundation’ model for specific tasks or datasets. Several foundation models are available for this purpose; a notable open-source example is GPT-NeoX, which was developed by EleutherAI rather than OpenAI. If you are interested in fine-tuning GPT-NeoX with your data, the following steps will guide you through the process.

Pre-requisites

GPT-NeoX requires some environment setup and dependencies to be in place before the model can be used. Here are the details:

1. Host setup

- Python version: Ensure that your environment uses Python 3.8. While Python 3.9 may work, the GPT-NeoX codebase is primarily designed and tested with Python 3.8 for compatibility and stability.

- Dependencies: Install the necessary dependencies by setting up a virtual environment and using a requirements file to avoid conflicts with other libraries. This typically involves installing PyTorch and other related packages.

- Special libraries: For improved performance, especially on certain GPU architectures, additional libraries like Flash-Attention might be utilized. Ensure you install these as specified and adjust your configuration accordingly.

- Choosing an environment isolation tool: Use an environment isolation tool such as Anaconda or a virtual machine. This practice helps prevent conflicts between different libraries and ensures a clean, reproducible setup. This is especially important when working with customized versions of libraries like DeepSpeed.

2. Containerized setup

- Docker: For isolated execution, you can use Docker. Create a Docker image from the provided Dockerfile and run the container, ensuring it has access to the necessary GPU resources.

- Pre-built images: Alternatively, use pre-constructed Docker images from a repository like Docker Hub, which may simplify the setup process.

3. Configuration

- Configuration files: GPT-NeoX operates based on parameters defined in YAML configuration files. These files dictate various settings such as parallelism, batch size, and optimization.

- Adjustments: Depending on your specific hardware and training requirements, you might need to adjust parameters such as the size of the model parallelism, batch sizes, and optimization settings. Refer to the configuration documentation for detailed descriptions of each parameter.

4. Data preparation

- Tokenization: Prepare your text data by tokenizing it using a suitable tokenizer. Tokenization converts raw text into a format that the model can process.

- Formatting: The data should be formatted as a large JSONL file, where each line represents a separate document with a single JSON key for the text.

- Pre-tokenization: Use preprocessing scripts to convert your dataset into a binary format that is compatible with the GPT model. This step includes specifying the tokenizer type and providing necessary files like vocabularies and merge files.
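The formatting step can be sketched with the standard `json` module: one JSON object per line, with the document text under a single key. The key name `"text"` and the helper names are assumptions for illustration; use whichever key your preprocessing script is configured to read.

```python
import json


def write_jsonl(documents, path, text_key="text"):
    """Write one JSON object per line, each holding a single document."""
    with open(path, "w", encoding="utf-8") as f:
        for doc in documents:
            # json.dumps escapes newlines inside the text, so every document
            # stays on exactly one line of the output file.
            f.write(json.dumps({text_key: doc}, ensure_ascii=False) + "\n")


def read_jsonl(path, text_key="text"):
    """Read the documents back, skipping blank lines."""
    with open(path, encoding="utf-8") as f:
        return [json.loads(line)[text_key] for line in f if line.strip()]
```

For example, `write_jsonl(["First document.", "Second document."], "train.jsonl")` produces a two-line file that the pre-tokenization step can then convert.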

5. Training

- Training script: Use the training script provided with the model to begin the training process. This script will leverage DeepSpeed or a similar framework to manage the training across multiple GPUs or nodes.

- Configuration: Supply configuration files that define the model architecture, training parameters, and data paths. Ensure that the scripts are executed on the appropriate hardware setup defined in your configuration.

- Execution: Launch the training process, which will distribute the workload across the available GPUs or nodes. This step involves initializing the model, loading data, and performing iterative updates to the model’s weights based on the training data.

6. Fine-tuning

- Fine-tuning: After initial training, you may fine-tune the model on specific tasks or datasets. This involves further training the model on a narrower dataset to adapt it for particular applications.

7. Evaluation

- Evaluation script: Use the evaluation script to assess the performance of the trained model. This involves running the model on evaluation tasks to measure its effectiveness in generating or understanding text.

- Metrics: Compare the model’s outputs against predefined benchmarks or tasks to evaluate its performance. Adjustments may be necessary based on evaluation results.
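One widely used intrinsic metric for language models is perplexity, the exponential of the average negative log-likelihood the model assigns to the actual next tokens. The sketch below assumes you already have those per-token natural-log probabilities from an evaluation run; the function name is illustrative.

```python
import math


def perplexity(token_log_probs):
    """token_log_probs: natural-log probabilities assigned to each actual next token."""
    if not token_log_probs:
        raise ValueError("need at least one token")
    avg_nll = -sum(token_log_probs) / len(token_log_probs)
    return math.exp(avg_nll)


# A model that gives every correct token probability 0.25 has perplexity 4:
# it is as uncertain as a uniform choice among four options.
print(perplexity([math.log(0.25)] * 10))
```

Lower perplexity on held-out data indicates that the model predicts the text better, which makes it a convenient number to track across fine-tuning runs.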

8. Generating text

- Sampling: Once training is complete, you can use the model to generate text based on input prompts. This involves running the model in inference mode to produce new text sequences.

9. Iterate and improve

- Refinement: Based on the generated outputs and evaluation results, you may need to iterate on the training process. This could involve retraining with adjusted parameters, additional data, or improved configurations.

Training a GPT model involves setting up a compatible environment, configuring model parameters, preparing and processing data, executing training processes, and evaluating the model’s performance. Each step is essential for building a robust and effective language model capable of generating high-quality text.

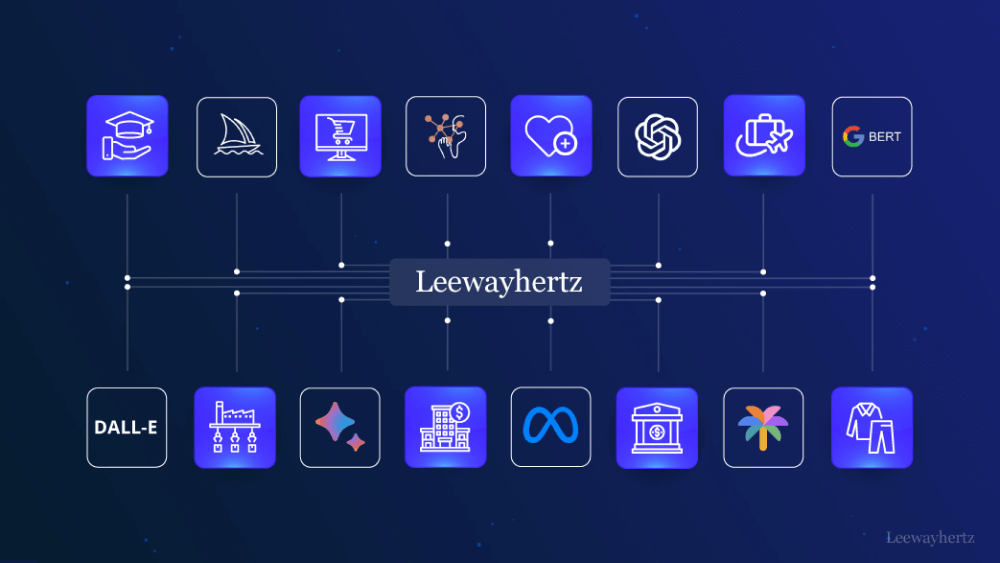

Leverage LeewayHertz’s AI development services to build a GPT model

LeewayHertz offers specialized GPT model development services, catering to the unique needs of businesses. LeewayHertz’s approach is multi-faceted and tailored to ensure businesses fully leverage the potential of AI. Here are some services that LeewayHertz offers to businesses willing to leverage GPT models:

Generative AI consulting

LeewayHertz provides expert consulting services to help businesses strategize the adoption of GPT models in line with their goals. Their profound technical expertise extends to foundational models and the broader spectrum of generative AI, enabling them to meticulously craft solutions that precisely meet clients’ requirements in accordance with their unique use cases.

Data analysis for GPT models

LeewayHertz excels in data analysis, a critical step in GPT model development. Whether dealing with structured datasets or unstructured text, its analysts are adept at extracting and processing data to uncover insights. This process is vital for training and refining GPT models to ensure they deliver accurate and relevant results.

Custom GPT model development

Recognizing the diverse needs of different industries, LeewayHertz specializes in creating custom, domain-specific GPT models using clients’ proprietary data. This process involves assessing the client’s industry and objectives, selecting an appropriate foundational model, and fine-tuning it with proprietary data. This ensures the model is not only powerful but also directly aligned with the client’s business needs.

Development of GPT-based solutions

LeewayHertz uses foundational models like GPT-4 and GPT-3.5 Turbo to build innovative solutions such as chatbots, recommendation systems, and predictive tools. These solutions are intelligent, creative, and adaptable, designed to tackle complex challenges in various business contexts.

Integration into workflows

An essential part of the service is the seamless integration of GPT-based solutions into clients’ existing tech infrastructures. This ensures minimal disruption to ongoing operations, allowing businesses to benefit from AI advancements without hindering their current processes.

Ongoing upgrade and maintenance

Understanding the dynamic nature of technology, LeewayHertz offers continuous maintenance and upgrade services. This ensures that the custom solution remains cutting-edge, providing ongoing value and innovation to keep businesses competitive.

LeewayHertz’s comprehensive approach in building GPT models involves in-depth consultation, specialized data analysis, custom model development, innovative solution creation, seamless integration, and ongoing support. This holistic approach ensures that businesses can effectively harness the power of generative AI to meet their specific objectives and challenges.

Things to consider while building a GPT model

Building a GPT model is a complex process that involves several key considerations to ensure the model is powerful, ethical, and safe. Here are the essential factors to keep in mind:

Addressing bias and toxicity

Training generative AI models like GPT on vast internet datasets can lead to the inclusion of biased or toxic content. To address this:

- Filter training datasets: Actively remove harmful content to minimize bias and toxicity, leading to more neutral and fair outputs.

- Implement watchdog models: Use additional models to monitor and filter the output in real-time, promptly detecting and addressing inappropriate content.

- Leverage first-party data: Utilize customized data to train and fine-tune models for specific use cases, enhancing performance and reducing biases by focusing on high-quality, relevant data.

Reducing hallucination

GPT models sometimes produce convincing but factually incorrect outputs, known as “hallucination.” To mitigate this issue:

- Data augmentation: Use diverse and robust datasets for training, increasing the likelihood of accurate responses.

- Adversarial training: Train models to recognize and correct incorrect outputs, refining the model’s ability to discern factual information.

- Improved model architectures: Develop advanced model structures to improve accuracy and reliability.

- Human evaluation: Regularly review and refine outputs through human oversight to ensure the highest possible accuracy.

Preventing data leakage

Protecting sensitive information is crucial to prevent it from being unintentionally incorporated into the model and resurfacing later. Key steps include:

- Transparent policies: Establish and enforce clear guidelines to prevent sensitive data from being used in training, ensuring data privacy and security.

- Vigilance: Continuously monitor and safeguard against potential data leakage risks, maintaining trust and integrity.
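As one small, concrete example of keeping sensitive data out of training, the sketch below masks email addresses before text is added to a corpus. The regular expression is a deliberately simple assumption that catches common address shapes only; it is not a complete solution, and a real pipeline would cover more identifier types and be reviewed against your own data policies.

```python
import re

# Simplified pattern for common email shapes; an assumption for illustration,
# not a full implementation of the email address grammar.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact_emails(text, placeholder="[EMAIL]"):
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL_RE.sub(placeholder, text)


print(redact_emails("Contact jane.doe@example.com today"))
# Contact [EMAIL] today
```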

Integrating queries and actions

Future generative models will evolve from static responses to dynamically interacting with external sources. This development involves:

- External source integration: Enable models to query databases or search engines in real-time, providing up-to-date and relevant information.

- Trigger actions: Allow models to initiate actions in external systems, transforming them into interactive and connected interfaces. This capability opens up new use cases and enhances user experiences.

Ensuring privacy and safety

Prioritize user privacy and safety by:

- Protecting user data: Implement robust data protection measures to safeguard user information.

- Secure interactions: Ensure all interactions are secure to prevent unauthorized access or misuse.

- Continuous monitoring: Regularly check the model’s performance to prevent unintended outputs or interactions, maintaining a high standard of safety.

Compliance with usage policies

If your solution builds on OpenAI’s models or API, adhering to OpenAI’s usage policies is essential to ensure ethical and legal use of GPT technology. This involves:

- Understanding policies: Familiarize yourself with OpenAI’s guidelines to ensure compliance.

- Operating within boundaries: Ensure your GPT models comply with these policies to maintain ethical and responsible use, fostering a positive impact and avoiding potential legal issues.

Building a GPT model requires careful consideration of ethical, technical, and practical aspects. By focusing on eliminating bias and toxicity, improving factual accuracy, and preventing data leakage, you can develop a powerful and responsible AI model. Incorporating dynamic interactions, ensuring privacy and safety, and complying with usage policies further enhance the model’s effectiveness and ethical standards. These considerations are crucial in harnessing the full potential of generative AI while minimizing risks and promoting positive outcomes.

The future of custom GPTs

As we look ahead, the development and application of custom GPTs are set to become even more transformative. Here’s a glimpse into what the future holds for this technology:

Advancing capabilities

- Enhanced natural language processing (NLP): Future GPT models will feature more refined NLP abilities, allowing them to understand and generate text with greater accuracy and relevance. Expect improvements in context recognition and response generation that push the boundaries of what these models can achieve.

- Nuanced emotional intelligence: As emotional understanding becomes more sophisticated, GPTs will be able to interpret and respond to user emotions more effectively. This enhancement will enable more empathetic interactions and tailored responses, enriching user experiences.

Community-driven innovation

- The role of the GPT store: The GPT Store is becoming a hub for innovation, where creators and developers can showcase their custom GPTs, share knowledge, and build upon each other’s work. This collaborative platform will drive rapid advancements and offer new opportunities for enhancement and customization.

- Crowdsourced development: Community contributions will continue to shape the evolution of GPT technology. By engaging with the broader GPT community, businesses gain access to emerging ideas, tools, and best practices that can further refine and elevate the custom GPT projects.

Integration and interactivity

- Dynamic integrations: Future GPT models will seamlessly integrate with a wide range of applications and systems. Expect custom GPTs to interact with databases, APIs, and other technologies in real time, enhancing their utility and versatility.

- Interactive capabilities: The ability for GPTs to perform real-time actions based on user interactions will become more prevalent. This evolution will expand the range of applications for custom GPTs, from automated decision-making to interactive storytelling.

Continuous learning and adaptation

- Ongoing improvement: Custom GPTs will benefit from continuous learning and adaptation. Future models will be able to incorporate new data and insights more efficiently, allowing them to stay current with changing trends and user needs.

- Personalization: As models become more adept at understanding individual preferences and contexts, they will offer increasingly personalized experiences, enhancing their effectiveness and user satisfaction.

The future of custom GPTs is brimming with possibilities, driven by advancements in technology, community collaboration, and a focus on ethical development. Embracing these changes will enable businesses to harness the full potential of custom GPTs and stay ahead in the rapidly evolving landscape of AI.

Endnote

GPT models mark a significant milestone in AI development and are part of a broader trend toward large language models (LLMs) that is likely to continue. OpenAI’s decision to provide API access reflects its model-as-a-service business approach. Because GPT models excel at language tasks such as text summarization, classification, and interaction, they enable a wide range of innovative products, and they are expected to shape how we use the internet, technology, and software. Building a GPT model can be challenging, but with the right approach and tools it is a rewarding effort that opens up new opportunities for NLP applications.

Want to get a competitive edge in your industry with GPT technology? Contact LeewayHertz’s AI experts to train a GPT model!


FAQs

What is a GPT model?

What are the use cases of GPT models?

- Natural Language Processing (NLP):

  - Enhancing information retrieval and sentiment analysis.

  - Improving language understanding and context in various applications.

- User Interface (UI) design:

  - Automating the creation of captions, titles, and interactive components in web development.

  - Streamlining UI design processes and reducing development resource dependencies.

- Image recognition with computer vision:

  - Excelling in image recognition tasks by identifying specific elements like faces, colors, and landmarks.

  - Integrating seamlessly with computer vision systems for versatile applications.

- Customer support chatbots:

  - Revolutionizing customer support with AI chatbots, providing instant and detailed responses.

  - Simulating human-like conversations to enhance customer satisfaction and loyalty.

- Language translation:

  - Accurate translation of text between various languages.

  - Facilitating cross-cultural communication by capturing nuanced meanings and retaining original contexts.

- Code generation in software development:

  - Streamlining code generation by understanding and producing programming language code.

  - Speeding up the coding process and reducing errors for more efficient software development.

- Personalized education content:

  - Generating personalized educational content, offering tailored tutoring and support.

  - Explaining complex concepts and assisting learners with homework for a more effective learning experience.

- Creative writing assistance:

  - Providing creative suggestions, overcoming writer’s block, and generating coherent stories or poems.

  - Enhancing creativity and productivity for writers in various creative fields.

The mentioned use cases only scratch the surface of GPT models’ capabilities. The versatility of GPT extends to numerous other applications, and ongoing research and development continue to uncover new possibilities.

How can LeewayHertz’s proficiency in GPT model development help my business?

- Customized solutions:

  - LeewayHertz can tailor GPT models to meet your specific business needs.

  - Customization ensures that the model aligns with your industry, domain, and unique requirements.

- Industry-specific applications:

  - LeewayHertz’s experience allows for the development of industry-specific applications using GPT models.

  - This can include solutions for healthcare, finance, legal, customer support, and more.

- Improved efficiency:

  - LeewayHertz can optimize GPT models for enhanced efficiency in specific business processes.

  - Streamlining workflows and automating repetitive tasks can lead to significant time and cost savings.

- Data security and compliance:

  - LeewayHertz is adept at implementing robust security measures and ensuring compliance with industry regulations.

  - This is crucial for businesses handling sensitive data, such as healthcare or financial information.

- Integration with existing systems:

  - LeewayHertz can seamlessly integrate GPT models with your existing systems and software.

  - This ensures a smooth transition and compatibility with your current technology stack.

- Scalability and performance:

  - LeewayHertz’s expertise includes optimizing GPT models for scalability and high performance.

  - This is particularly important as your business grows and data processing requirements increase.

- User experience enhancement:

  - LeewayHertz can enhance user experiences by incorporating GPT models in applications, chatbots, or virtual assistants.

  - This can lead to improved customer engagement and satisfaction.

- Innovation and future-proofing:

  - LeewayHertz can keep your business at the forefront of innovation by incorporating the latest advancements in GPT technology.

  - This ensures that your solutions remain relevant and competitive in the long term.

Partnering with LeewayHertz for GPT model development can empower your business with cutting-edge AI capabilities, driving efficiency, innovation, and a competitive edge in your industry.

What tools and knowledge are essential for building a GPT model?

- A deep learning framework (e.g., TensorFlow or PyTorch) for model implementation and training.

- Abundant training data (e.g., text from books or articles) to train the model on language patterns.

- High-performance computing environment (e.g., GPUs or TPUs) to accelerate the training process.

- Data pre-processing and cleaning tools (e.g., NumPy, Pandas, or NLTK) to prepare training data.

- Evaluation metrics (e.g., perplexity or BLEU scores) for measuring and improving model performance.

- NLP library (e.g., spaCy or NLTK) for tokenization and other NLP tasks on input data.

The knowledge required:

- Understanding of deep learning concepts, neural networks, and NLP.

- Familiarity with transformers, attention mechanisms, and optimization algorithms (e.g., stochastic gradient descent).

- Knowledge of pretraining and generative models to enhance GPT model performance.

- Proficiency in programming languages such as Python (with TensorFlow, PyTorch, NumPy), R, or Julia.

To build a GPT model, it’s crucial to have a deep understanding of concepts like neural networks, NLP, and optimization, along with proficiency in a programming language suitable for deep learning and AI applications. Clearly, building a GPT model demands significant resources, making it a smart choice to enlist the expertise of a professional service provider. Let LeewayHertz take the lead in crafting a tailored GPT model for your needs.

What data privacy and security considerations should be taken into account when integrating a GPT model into enterprise operations?

How does implementing a GPT model contribute to enhancing interactions and streamlining processes within an enterprise?

Can LeewayHertz train an existing GPT model with my proprietary data to make it relevant to my industry and business?

How can LeewayHertz assist businesses in choosing the right GPT model based on their unique needs and preferences?
